Only 40% of strategic goals are on-track, and 81% of assigned owners haven't logged an update in 90+ days. Here is what ClearPoint's analysis of 21,000+ plans reveals about strategy.

Here's a number that should change how you think about strategic planning:

Source: ClearPoint platform analysis of 543,000+ measures across all verticals, May 2026. "Phantom owner" = assigned measure owner with zero updates in 90+ days.

That's the first industry-level breakdown of strategy ownership activity ever published. In higher education, 9 out of 10 people assigned to a strategic KPI haven't updated it in 90+ days. In financial services, where regulators impose external reporting discipline, 91% of owners stay active.

After 30 years applying the Balanced Scorecard methodology and helping more than 1,000 organizations build strategic plans, I've watched the same pattern from both sides. Working with Bob Kaplan and David Norton, I saw organizations produce brilliant strategy maps and then lose 80%+ of their measure owners to silence within two quarters. ClearPoint now hosts more than 21,000 strategic plans, 500,000+ performance measures, and processes over 2 million updates every month. That dataset has forced me to abandon several things I used to believe about strategic planning.

In 2018, I sat in a quarterly review with a city manager running a metro area of 280,000 residents. She pulled up their strategic plan: 47 goals, 200+ KPIs. Her team had spent six months building it. When I asked how many were being actively updated, the room went quiet. The answer was eleven. That's roughly a 5.5% update rate, far below even the 18.9% of owners who actively report across the platform today. That moment shaped everything we built into ClearPoint's accountability engine.

This guide covers the three findings from our platform data that most contradict conventional strategic planning wisdom — and what the organizations that beat the odds do differently.

Finding #1: Only 40% of strategic goals are on-track — and more planning doesn’t fix it

Let’s start with the number that changed how I think about this work.

Across every strategic plan on the ClearPoint platform — government, healthcare, education, enterprise — 39.85% of strategic goals are rated green (on-track) at any given time. Nearly 19% are explicitly off-track. Another 19% are at risk. And 15% haven't even started.

The instinct when you see numbers like these is to blame the strategy itself. Bad goals. Misaligned priorities. Insufficient analysis. That's the conventional response — and it's wrong.

Here's what the data actually shows: the quality of the upfront strategic planning process has almost no correlation with execution success. Organizations that spend six months on SWOT analysis and stakeholder workshops have roughly the same on-track rates as those that hammer out a plan in a two-day retreat. The variable that actually predicts success is what happens after the plan is written — specifically, how the organization monitors, assigns ownership, and enforces review cadences.

The conventional explanation is that execution failure is a leadership problem. Our data says it's an infrastructure problem. The strategy industry frames execution failure as a discipline deficit: executives don't spend enough time on strategy, don't communicate the "why," don't sustain momentum. We can test that hypothesis directly. ClearPoint platform data shows that organizations with zero automation infrastructure (no API integrations, no scheduled data feeds) have a 92% phantom owner rate, meaning virtually nobody assigned to a KPI actually updates it. Organizations with high automation (2+ API connections and 5+ automated schedules) drop to 70%. These are different organizations, but the variable that most clearly separates the two groups is whether the infrastructure makes reporting frictionless or painful.

That's a 22-point gap that tracks plumbing, not leadership charisma. You can't fix phantom ownership with more executive attention if you don't have a system that surfaces which owners are phantom in the first place. The industry's prescription is "leaders should spend more time on strategy." Our data says: leaders should spend less time on strategy formulation and redirect that time to building monitoring systems that make execution self-reporting.
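To make the tier comparison concrete, here is a minimal Python sketch that buckets organizations using the thresholds above (zero automation = no API integrations and no schedules; high automation = 2+ API connections and 5+ automated schedules) and computes a phantom-owner rate per tier. The `OrgAutomation` record, the "partial" label for everything in between, and the choice to pool owners across organizations are illustrative assumptions, not ClearPoint's actual method; the sample organizations are invented.

```python
from dataclasses import dataclass


@dataclass
class OrgAutomation:
    name: str
    api_integrations: int     # API connections feeding measure data
    automated_schedules: int  # scheduled data feeds / imports
    assigned_owners: int      # people assigned as measure owners
    phantom_owners: int       # owners with no update in 90+ days


def automation_tier(org: OrgAutomation) -> str:
    """Bucket an organization using the article's automation thresholds."""
    if org.api_integrations == 0 and org.automated_schedules == 0:
        return "zero"
    if org.api_integrations >= 2 and org.automated_schedules >= 5:
        return "high"
    return "partial"  # assumed label for everything in between


def phantom_rate_by_tier(orgs: list[OrgAutomation]) -> dict[str, float]:
    """Pooled phantom-owner rate (percent) for each automation tier."""
    totals: dict[str, tuple[int, int]] = {}
    for org in orgs:
        tier = automation_tier(org)
        owners, phantoms = totals.get(tier, (0, 0))
        totals[tier] = (owners + org.assigned_owners, phantoms + org.phantom_owners)
    return {tier: 100 * p / o for tier, (o, p) in totals.items() if o > 0}


# Invented sample organizations, for illustration only.
orgs = [
    OrgAutomation("Org A", api_integrations=0, automated_schedules=0, assigned_owners=50, phantom_owners=46),
    OrgAutomation("Org B", api_integrations=3, automated_schedules=12, assigned_owners=100, phantom_owners=70),
    OrgAutomation("Org C", api_integrations=1, automated_schedules=2, assigned_owners=40, phantom_owners=30),
]
rates = phantom_rate_by_tier(orgs)
print(rates, "gap:", rates["zero"] - rates["high"])
```

Pooling counts every owner equally, so one very large organization can dominate its tier; averaging per-organization rates instead would weight each organization equally. The article doesn't say which approach the platform figures use.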

Here's the belief I had to abandon: that tracking fewer KPIs means better oversight. The top 5% of plans on ClearPoint, those at or above the 95th percentile of evaluation rate, track a median of 947 measures. The bottom 50% track a median of 558. The top performers aren't tracking fewer things. They're tracking more, but they've built the automation infrastructure to keep every measure alive. Their update-to-login ratio is 9:1 (vs. 2.3:1 for the bottom half), meaning data flows in without anyone needing to remember to log in and type a number. Their phantom owner rate is 64%: still high, but 17 points lower than the bottom half's 81%.

The city manager I mentioned earlier — 47 goals, 200+ KPIs — had an 11-out-of-200 active update rate. That's 5.5%. She didn't have a scope problem. She had a plumbing problem: no API feeds, no automated imports, no frictionless update path. Every data point required a human to log in, navigate, and type. Humans don't scale.

What I got wrong about planning: For the first decade of my career, I believed that getting the strategy right was 80% of the battle. Every engagement started with frameworks, facilitation, and a perfectly structured strategy map. The data forced me to reverse that ratio. The top 5% of plans on our platform have a 94.7% evaluation rate — not because they have better strategies, but because they average 9 updates for every login. Their bottom-half counterparts have essentially the same number of strategic initiatives (500 vs. 446) but achieve only a 12.3% evaluation rate. Same strategies, different plumbing, completely different outcomes. Formulation is closer to 20% of the result. The other 80% is the infrastructure that keeps data flowing after the offsite ends.

Finding #2: 81% of strategy owners are ghosts — and that’s the real execution killer

This is the finding that surprised us most.

When we analyzed update patterns across the full ClearPoint dataset (543,000+ individual measures, spanning every type of organization), we found that 81.1% of people assigned as KPI owners hadn't logged a single update in at least 90 days. That's our definition of a "phantom owner": anyone assigned to a measure who hasn't recorded a progress update in 90+ days. Across our entire platform, only 18.9% of assigned owners are actively reporting.

This number isn't a ClearPoint problem — it's an organizational accountability problem that ClearPoint makes visible. Before an organization has a system that tracks who's updating and who isn't, phantom ownership is invisible. Leadership assumes the plan is being executed because it was assigned. But assignment without monitoring is indistinguishable from abandonment.
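The phantom-owner definition is simple enough to express directly. The sketch below assumes each owner's most recent update date is available (or `None` if they never updated) and treats a gap of exactly 90 days as phantom; both the data shape and that boundary choice are assumptions, and the owner names are placeholders.

```python
from datetime import date, timedelta
from typing import Optional

# "Phantom owner" = assigned owner with no progress update in 90+ days.
PHANTOM_WINDOW = timedelta(days=90)


def is_phantom(last_update: Optional[date], as_of: date) -> bool:
    """True if the owner never updated, or last updated 90 or more days ago."""
    return last_update is None or (as_of - last_update) >= PHANTOM_WINDOW


def phantom_rate(last_updates: dict[str, Optional[date]], as_of: date) -> float:
    """Share of assigned owners (0.0 to 1.0) who are phantoms on `as_of`."""
    if not last_updates:
        return 0.0
    phantoms = sum(is_phantom(d, as_of) for d in last_updates.values())
    return phantoms / len(last_updates)


as_of = date(2026, 5, 1)
owners = {
    "owner-a": date(2026, 4, 20),  # 11 days ago: active
    "owner-b": date(2026, 1, 15),  # 106 days ago: phantom
    "owner-c": None,               # never updated: phantom
}
print(f"phantom rate: {phantom_rate(owners, as_of):.0%}")
```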

The 7:1 ratio that explains the fix. On ClearPoint, the platform-wide update-to-login ratio is 7.1:1, meaning roughly seven updates are recorded for every manual login, much of it arriving via email, API integration, or automated data imports rather than through someone signing in. Mid-size organizations (11-25 users) hit a peak of 10.6:1, the sweet spot where teams are large enough to invest in automation but small enough that every member stays engaged. The mechanism is simple: when updating a KPI requires logging in, navigating to the right page, and typing a number, most people won't do it. When data flows in automatically from the systems people already use, phantom ownership drops by 22 points (from 92% to 70%).
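A hypothetical way to compute the update-to-login ratio from an activity log. The event kinds (`login`, `manual_update`, `email_update`, `api_update`, `import_update`) are invented for illustration, and the sketch assumes manual updates happen inside a login session while the other kinds arrive without one.

```python
from collections import Counter

UPDATE_KINDS = {"manual_update", "email_update", "api_update", "import_update"}


def update_to_login_ratio(events: list[tuple[str, str]]) -> tuple[float, float]:
    """events: (user, kind) pairs, where kind is "login" or one of UPDATE_KINDS.

    Returns (updates per login, share of updates that did not need a login).
    Assumes manual updates are entered inside a login session and the
    other update kinds arrive without one.
    """
    kinds = Counter(kind for _, kind in events)
    logins = kinds["login"]
    updates = sum(kinds[k] for k in UPDATE_KINDS)
    hands_free = updates - kinds["manual_update"]
    ratio = updates / logins if logins else float("inf")
    share = hands_free / updates if updates else 0.0
    return ratio, share


# Invented month of activity for one team.
log = (
    [("owner-a", "login")] * 2
    + [("owner-a", "manual_update")] * 3
    + [("feed", "api_update")] * 5
    + [("owner-b", "email_update")] * 4
    + [("feed", "import_update")] * 2
)
ratio, share = update_to_login_ratio(log)
print(f"{ratio:.1f}:1 updates per login, {share:.0%} arrived without a login")
```

The second number is the one to watch: a high ratio is only sustainable when most updates don't depend on someone remembering to sign in.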

What this looks like in practice: The City of Durham, NC went from having no formal strategic plan — and 22 separate Excel spreadsheets with over 100 tabs — to monitoring more than 4,300 performance measures across every department on ClearPoint. They grew from 2 users to nearly 300 trained employees. The transformation eliminated 4 staff positions previously dedicated to manual data compilation and helped Durham earn an ICMA Center for Performance Measurement Certificate of Distinction. As Strategy and Performance Manager Shari Metcalfe put it: "A big turning point was getting a system that we could use to manage all that data. Less time putting numbers in a system and more time actually looking at the numbers and making decisions."

The critical detail: Durham's measure count grew 21% year-over-year (from 3,562 to 4,304 tracked measures) — meaning adoption expanded after initial implementation. That's the opposite of the typical pattern where plans launch big and decay. When reporting is frictionless, people want to track more, not less.

Why the industry spread matters (see the table at the top of this article): the 80-point gap between Higher Education's 89.9% phantom rate and Financial Services' 9.0% isn't about sector culture or leadership quality. It's about whether external forces already impose reporting discipline. Financial services firms file quarterly regulatory reports. Utilities report to public utility commissions. These organizations didn't choose to be disciplined — compliance made updating non-optional. The sectors with the worst phantom rates (education, enterprise) are the ones where nobody outside the organization demands proof of progress. The implication: if you're not in a regulated industry, you have to build the external forcing function yourself — through automated data feeds, scheduled workflows, and visible ownership dashboards. The organizations that treat monitoring as optional will always lose 70-90% of their measure owners to silence.

The phantom owner problem follows a U-curve. We expected organizations with more measures to have significantly lower phantom rates — the "measurement maturity" argument. The data reveals a more nuanced pattern. Phantom ownership drops from 84.7% (small orgs with 1-100 measures) to a sweet spot of 63.6% at the 501-1,000 measure range — a 21-point improvement. But then it rebounds to 76.5% for organizations tracking 1,000+ measures, where the sheer number of assigned owners creates a long tail of non-updaters. The fix isn't more KPIs or fewer KPIs — it's frictionless reporting infrastructure at every scale: automated data feeds, email-based updates, and API integrations that remove the friction from the update process entirely.

The automation fingerprint inside the U-curve. The U-curve raises an obvious question: why does the phantom rate improve from 1-100 measures to 501-1,000, then reverse at 1,000+? We dug into the infrastructure signatures of each bucket. Organizations in the 501-1,000 range average 21 automated schedules and 1.6 API integrations — they've invested in the plumbing that keeps data flowing without human effort. The 1,000+ organizations average 91 schedules and 3.6 API keys — far more automation in absolute terms — but the sheer number of assigned owners (thousands per organization) creates a long tail of people who were given a KPI but never received an automated reporting path. The automation exists at the core; the periphery is still manual. That's the structural explanation: the problem isn't that large organizations are less disciplined. It's that their automation coverage hasn't kept pace with their measurement ambition. Every KPI without an automated data feed is a phantom owner waiting to happen.
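The "automation coverage" idea can be sketched as three small functions: one assigns an organization to a measure-count bucket, one reports what share of its measures have an automated feed, and one lists which owners are left on a manual path. The 101-500 bucket, the treatment of exactly 1,000 measures as part of 501-1,000, and the `automated_feed` and `owner` fields are assumptions; the article only names the 1-100, 501-1,000 and 1,000+ ranges.

```python
def measure_bucket(n_measures: int) -> str:
    """Assign an organization to a measure-count bucket.

    1-100, 501-1,000 and 1,000+ come from the article; 101-500 is an
    assumed middle bucket, and exactly 1,000 is placed in 501-1,000.
    """
    if n_measures <= 100:
        return "1-100"
    if n_measures <= 500:
        return "101-500"
    if n_measures <= 1000:
        return "501-1,000"
    return "1,000+"


def automation_coverage(measures: list[dict]) -> float:
    """Share of measures (0.0 to 1.0) that have an automated data feed."""
    if not measures:
        return 0.0
    return sum(1 for m in measures if m["automated_feed"]) / len(measures)


def manual_path_owners(measures: list[dict]) -> set[str]:
    """Owners of measures with no automated feed: the likely future phantoms."""
    return {m["owner"] for m in measures if not m["automated_feed"]}


# Invented example: automation at the core, manual entry at the periphery.
measures = [
    {"owner": "core-finance", "automated_feed": True},
    {"owner": "core-finance", "automated_feed": True},
    {"owner": "branch-7", "automated_feed": False},
    {"owner": "branch-9", "automated_feed": False},
]
print(measure_bucket(len(measures)), automation_coverage(measures), sorted(manual_path_owners(measures)))
```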

Finding #3: The median strategic project takes 11 months — but most organizations plan for 6

This is the timeline delusion.

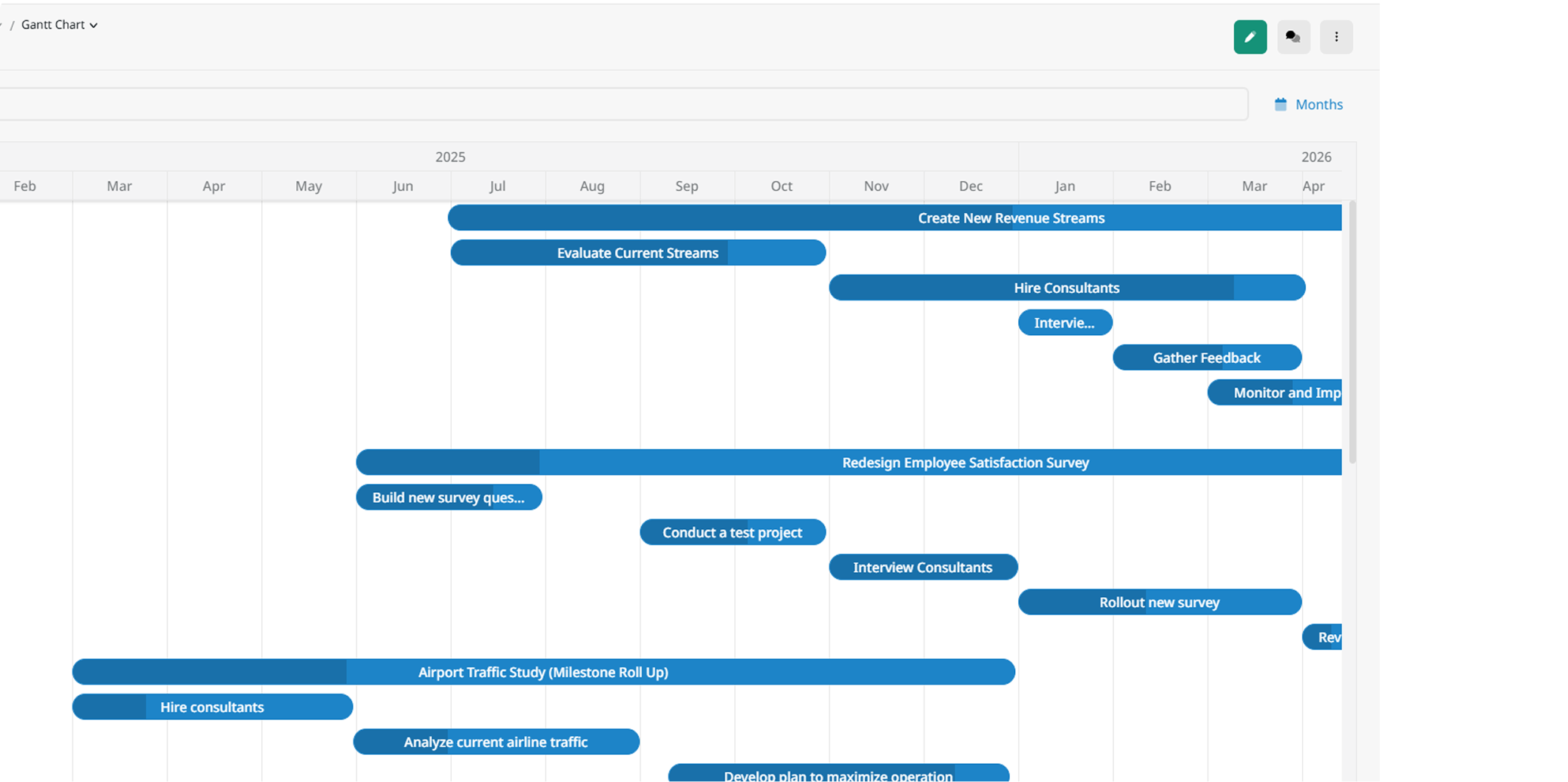

When we measured actual project completion times across 200,000+ active projects and 290,000+ milestones on the ClearPoint platform, the median strategic project took 10.94 months. The average was even longer — 16.74 months, pulled up by complex multi-year initiatives. Yet in our onboarding conversations, most organizations describe their expected timeline as 3-6 months per initiative.

This isn't optimism — it's a structural misunderstanding of how strategic work actually progresses through an organization. Strategic projects aren't software sprints. They require budget cycles, stakeholder alignment, regulatory approvals (especially in government and healthcare), and the kind of cross-departmental coordination that doesn't compress well.
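Why the average (16.74 months) sits so far above the median (10.94 months): project durations are right-skewed, so a few multi-year initiatives pull the mean up while the median barely moves. The durations below are made-up illustrative values, not ClearPoint data.

```python
from statistics import mean, median

# Illustrative project durations in months (NOT ClearPoint data):
# six projects of 6-14 months plus one multi-year initiative.
durations = [6, 8, 10, 11, 12, 14, 40]

print(f"median: {median(durations):.1f} months")  # middle value; ignores the tail
print(f"mean:   {mean(durations):.2f} months")    # pulled up by the 40-month project
```

Here the median is 11 months and the mean is about 14.4. Drop the 40-month outlier and the mean falls to about 10.2 months, while the median only shifts from 11 to 10.5. That robustness is why the median is the better planning anchor.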

The planning fallacy at organizational scale. When you combine unrealistic timelines with the phantom owner problem, you get the most common failure mode we see: organizations launch 10-15 strategic initiatives simultaneously, expect them all to complete within a fiscal year, assign owners who never report progress, and then wonder at the annual review why nothing moved. The math never worked. The monitoring never existed. The failure was designed in from day one.

What high-performing organizations do differently: They limit concurrent strategic initiatives to 4-6, stagger launch dates based on realistic durations, and build an explicit 30% buffer into every timeline. The City of Fort Collins exemplifies this discipline: they hold monthly 40-minute Strategy MAP meetings for each service area and run quarterly comprehensive assessments across their 7 strategic outcomes and 38 top-tier metrics. This cadence, monthly pulse checks plus quarterly deep dives, is the pattern we see across our most successful organizations.

ClearPoint platform data reinforces this: organizations with 60+ active updaters per district — a proxy for embedded review culture — maintain measurably higher update-to-login ratios, meaning their teams report progress proactively rather than reactively. Fort Collins, with 64 active updaters across 34 user accounts (a 188% updater-to-user ratio), exemplifies the pattern. When review cadence is built into organizational rhythm, progress tracking stops being a burden and becomes the default behavior.

What the top 5% of strategic plans do differently

We segmented every active organization on ClearPoint by evaluation rate — the percentage of measures that have a status indicator applied — and compared the top 5% against the bottom 50%. The differences aren't subtle.

Source: ClearPoint Snowflake, April 2026 snapshot. "Top 5%" = organizations at the 95th percentile of evaluation rate. Filtered to active orgs with 5+ users and 1+ measure.
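The segmentation described above can be sketched with Python's standard `statistics` module. This assumes each organization record carries `users`, `measures`, and `evaluated` (measures with a status indicator) counts; those field names are hypothetical. It uses `quantiles(..., method="inclusive")` so the 95th-percentile cut stays within the observed range on small samples, which may differ from how the snapshot computed its percentile.

```python
from statistics import median, quantiles


def evaluation_rate(measures: int, evaluated: int) -> float:
    """Percentage of measures that have a status indicator applied."""
    return 100 * evaluated / measures


def segment(orgs: list[dict]):
    """Split orgs into the top 5% and bottom 50% by evaluation rate.

    Applies the snapshot's filter (5+ users, 1+ measure) first. Requires at
    least two eligible organizations for `quantiles`.
    """
    eligible = [o for o in orgs if o["users"] >= 5 and o["measures"] >= 1]
    rates = [evaluation_rate(o["measures"], o["evaluated"]) for o in eligible]
    # n=20 gives 19 cut points; the last one is the 95th percentile.
    p95 = quantiles(rates, n=20, method="inclusive")[-1]
    p50 = median(rates)
    top = [o for o, r in zip(eligible, rates) if r >= p95]
    bottom = [o for o, r in zip(eligible, rates) if r <= p50]
    return top, bottom, p95


# Invented data: 20 eligible orgs with evaluation rates of 5%, 10%, ..., 100%,
# plus one org filtered out for having fewer than 5 users.
sample = [{"users": 8, "measures": 200, "evaluated": 10 * k} for k in range(1, 21)]
sample.append({"users": 3, "measures": 50, "evaluated": 50})
top, bottom, p95 = segment(sample)
print(f"95th percentile cut: {p95:.2f}%, top: {len(top)}, bottom half: {len(bottom)}")
```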

Three patterns stand out. First, the top 5% track more measures, not fewer — median 947 vs. 558. The "simplify your plan" advice is half-right: you should have fewer strategic objectives, but the organizations with the best execution track granular operational metrics underneath those objectives. The depth creates accountability.

Second, the update-to-login ratio gap is the clearest signal. A 9:1 ratio means data flows in automatically — via API feeds, email-based collection, and scheduled imports. A 2.3:1 ratio means every update requires a manual login. That single infrastructure difference explains most of the evaluation gap.

Third — and this surprised us — the top 5% run fewer initiatives (446 vs. 500 for the bottom half). They're tracking more measures but executing fewer projects. That's the discipline of knowing what to measure (everything that matters) versus what to do (only what you can resource properly). Measurement breadth with execution focus. It's the opposite of what most planning guides recommend.

The uncomfortable finding we almost didn't publish: even in the top 5%, the phantom owner rate is 64%. Roughly two-thirds of assigned owners still don't update. The difference is that these organizations have enough automated data feeds and calculated series to keep measures evaluated despite human non-compliance. They've engineered around the human failure mode rather than trying to solve it with training or culture change. That's the most counterintuitive insight in this entire dataset: the best-performing plans succeed not because their people are more disciplined, but because their systems are designed to work even when people aren't.

The strategic planning process that actually works (data-informed, not textbook)

Based on what the data shows, here’s the 5-step process I’d actually recommend — stripped of the generic advice and rebuilt around the failure modes our dataset reveals.

Step 1: Start with your performance baseline, not a SWOT

Most guides tell you to start with a SWOT analysis. Our data suggests that's the wrong starting point.

The organizations on ClearPoint that achieve the highest goal completion rates start with a quantitative performance baseline — not a qualitative brainstorming exercise. Before you ask "what are our strengths?", ask "what are we actually measuring, how often, and who's responsible for each metric?" If you can't answer that question, you don't have a strategy problem — you have a visibility problem.

Working with Kaplan and Norton, I watched organization after organization produce brilliant SWOT analyses that led to beautifully drawn strategy maps — and then fail to execute because nobody had established a measurement baseline before setting targets. Bob used to say that if you can't quantify where you are today, you can't meaningfully define where you want to be tomorrow. A SWOT tells you what you think your strengths are. A performance baseline tells you what the data says they are. I've seen those two disagree more often than they align.

This doesn't mean SWOT is useless — it means SWOT is insufficient. Pair it with a concrete performance inventory: how many KPIs do you currently track? Who owns each one? What percentage are being updated regularly? If the answer is "we don't know," that's your first strategic priority — not vision statements.
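A performance inventory can start as a short script over whatever KPI list you already have. This sketch assumes each KPI is a dict with `name`, `owner` (or `None`), and `last_update` (a date or `None`), and borrows the 90-day phantom-owner window as the definition of "updated regularly"; both are assumptions you should adjust, and the sample KPIs are invented.

```python
from datetime import date, timedelta


def performance_inventory(kpis: list[dict], as_of: date, window_days: int = 90) -> dict:
    """Answer the baseline questions: how many KPIs, who owns each one,
    and what percentage were updated within `window_days` of `as_of`.

    Each KPI is a dict with 'name', 'owner' (str or None) and
    'last_update' (date or None).
    """
    cutoff = as_of - timedelta(days=window_days)
    kpis_per_owner: dict[str, list[str]] = {}
    for k in kpis:
        if k["owner"]:
            kpis_per_owner.setdefault(k["owner"], []).append(k["name"])
    fresh = [k for k in kpis if k["last_update"] is not None and k["last_update"] > cutoff]
    return {
        "total_kpis": len(kpis),
        "unowned": [k["name"] for k in kpis if not k["owner"]],
        "kpis_per_owner": kpis_per_owner,
        "pct_updated": 100 * len(fresh) / len(kpis) if kpis else 0.0,
    }


inventory = performance_inventory(
    [
        {"name": "Response time", "owner": "operations", "last_update": date(2026, 4, 1)},
        {"name": "Budget variance", "owner": "finance", "last_update": date(2025, 12, 1)},
        {"name": "Resident satisfaction", "owner": None, "last_update": None},
        {"name": "Permit backlog", "owner": "operations", "last_update": None},
    ],
    as_of=date(2026, 5, 1),
)
print(inventory)
```

If `unowned` is non-empty or `pct_updated` is low, that is the visibility problem described above, surfaced before any strategy work begins.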

The Government Finance Officers Association (GFOA) recommends pairing qualitative assessment with a broader environmental scan covering political, economic, social, technological, legal, and environmental factors. This is especially critical in regulated industries — healthcare organizations navigating CMS requirements, local governments responding to new GASB standards, and universities facing accreditation changes.

Practical tip from 1,000+ implementations: Involve 12-15 stakeholders from varied departments and seniority levels. But do something most facilitators skip — before the first workshop, send each participant a 5-question diagnostic asking about their current measurement practices, reporting frequency, and pain points. The data you collect will tell you more about your strategic readiness than any SWOT quadrant.

Step 2: Set 4-6 objectives maximum — not 15

ClearPoint data shows the average strategic plan tracks 7.2 goals with 6.72 team members involved in execution. But the most effective plans, the ones with the highest on-track percentages, cap themselves at 4 to 6 strategic objectives.

This is counterintuitive. Leadership teams feel they need comprehensive coverage — goals for finance, operations, customer experience, talent, innovation, compliance, and community engagement. The result is a 15-objective plan where everything is "strategic" and nothing gets focused attention.

The data is clear: focus beats comprehensiveness. Every objective beyond six dilutes ownership, fragments attention, and increases the phantom owner rate.

For each objective, define success with measurable KPIs — specific targets with timelines, not directional aspirations. And critically, assign ownership only to people who have explicitly agreed to the reporting cadence. A name on a spreadsheet is not ownership. Ownership means: "I will update this metric by the 15th of every month, and here's what I'll report."
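One way to make the "by the 15th of every month" commitment checkable is to compute the most recent deadline and flag owners whose latest update predates the reporting window that deadline closed. The `Ownership` class, the `month_offset` helper, and the rule that a deadline passes only once its day is over are hypothetical conventions for illustration, not a ClearPoint feature.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


def month_offset(d: date, months: int, day: int) -> date:
    """Return `day` of the month that is `months` away from d's month."""
    index = d.year * 12 + (d.month - 1) + months
    return date(index // 12, index % 12 + 1, day)


@dataclass
class Ownership:
    owner: str
    metric: str
    due_day: int = 15  # "I will update this metric by the 15th of every month"

    def __post_init__(self) -> None:
        if not 1 <= self.due_day <= 28:
            raise ValueError("due_day must be 1-28 so it exists in every month")

    def last_passed_deadline(self, as_of: date) -> date:
        """Most recent deadline that is already over (the deadline day itself is still open)."""
        months = 0 if as_of.day > self.due_day else -1
        return month_offset(as_of, months, self.due_day)

    def is_overdue(self, last_update: Optional[date], as_of: date) -> bool:
        """Overdue if the latest update is no later than the start of the last closed window."""
        deadline = self.last_passed_deadline(as_of)
        window_start = month_offset(deadline, -1, self.due_day)
        return last_update is None or last_update <= window_start


commitment = Ownership(owner="owner-a", metric="Permit backlog")
print(commitment.last_passed_deadline(date(2026, 4, 20)))
print(commitment.is_overdue(date(2026, 3, 10), as_of=date(2026, 4, 20)))
```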

For practical examples, see our guides on vision statement examples and mission statement examples.

Step 3: Build the execution infrastructure before you launch

This is where most strategic planning guides stop — they've given you the strategy, and now it's "time to execute." That handoff is exactly where plans go to die.

Before you announce a single initiative, build three things:

1. A monitoring system. Not a spreadsheet. Not a shared drive with quarterly PowerPoints. A system where every KPI has an owner, every owner has a reporting schedule, and leadership has a real-time dashboard showing what's green, yellow, and red. ClearPoint platform data shows that organizations with structured monitoring maintain significantly higher goal completion rates than those using ad-hoc tracking.

2. A reporting protocol that's frictionless. Remember the 7:1 ratio: you need roughly seven updates flowing in for every login, which only happens when most updates don't require one. That means email-based data collection, API integrations with existing data sources, and automated imports. If reporting requires a 20-minute login-navigate-enter-save workflow, your phantom owner rate will be 90%+.

3. An explicit accountability structure. Carilion Clinic, a Virginia healthcare system with 13,000 employees and 1 million annual patient visits, manages nearly 350 scorecards across four levels: clinic-wide, department, section, and individual provider. They tie 20% of provider compensation to performance scorecard outcomes. As Director of Finance Darren Eversole explained: "If the provider understands what they are being measured on, we see tremendous success."

The most telling detail about Carilion isn't scale; it's refinement. They started with roughly 70 clinic-level measures and deliberately trimmed them to 7. Their total measure count went from approximately 2,000 to 1,947, a counterintuitive reduction that reflects strategic focus, not abandonment. Less noise, more signal. That discipline (knowing what not to measure) is what separates organizations that use data from organizations that drown in it.

That's accountability by design, not by hope. You don't need to tie compensation to scorecards (though it works). You need ownership that's visible, reported on, and reviewed — every month.
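The green/yellow/red dashboard in item 1 needs a status rule. The sketch below assumes a simple relative-to-target rule with a hypothetical 10% yellow band and a `higher_is_better` flag for metrics like response time, then rolls statuses up and lists the owners of red KPIs. Real scorecards often use per-measure thresholds instead; the sample KPIs are invented.

```python
from collections import Counter


def kpi_status(actual: float, target: float, higher_is_better: bool = True,
               yellow_band: float = 0.10) -> str:
    """Traffic-light status relative to target.

    green: at or better than target; yellow: within `yellow_band` (assumed 10%)
    of target; red: worse than that.
    """
    if target == 0:
        raise ValueError("target must be non-zero for a relative band")
    gap = (actual - target) / abs(target)
    if not higher_is_better:
        gap = -gap  # e.g. response time: below target is good
    if gap >= 0:
        return "green"
    if gap >= -yellow_band:
        return "yellow"
    return "red"


def dashboard(kpis: list[dict]) -> tuple[Counter, list[str]]:
    """Count statuses and list the owners of red KPIs for follow-up."""
    statuses = [
        (k["owner"], kpi_status(k["actual"], k["target"], k.get("higher_is_better", True)))
        for k in kpis
    ]
    summary = Counter(status for _, status in statuses)
    red_owners = sorted({owner for owner, status in statuses if status == "red"})
    return summary, red_owners


summary, red_owners = dashboard([
    {"name": "On-time permits", "owner": "owner-a", "actual": 80, "target": 100},
    {"name": "Budget adherence", "owner": "owner-b", "actual": 97, "target": 100},
    {"name": "Response minutes", "owner": "owner-c", "actual": 7, "target": 8,
     "higher_is_better": False},
])
print(dict(summary), red_owners)
```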

Step 4: Execute with realistic timelines and sequenced initiatives

Given that the median project takes 11 months:

- Limit concurrent initiatives to 4-6. This matches the optimal objective count and prevents resource fragmentation.

- Stagger launch dates by at least 6-8 weeks between initiatives. Simultaneous launches create resource conflicts that doom all of them.

- Build 30% buffer into every timeline. A 12-month project is really a 16-month project. Plan accordingly.

- Connect strategy to budget — the GFOA recommends executing and monitoring tactics through the budget development process. As one practitioner put it during a ClearPoint implementation: "A strategic plan not linked to budget is an unfunded dream."
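The sequencing rules above can be sketched as a small scheduler: launches are staggered by a fixed number of weeks, each estimate is stretched by the 30% buffer, and a new initiative waits for a slot once the concurrency cap is reached. Defaults of 5 concurrent initiatives and a 6-week stagger sit inside the 4-6 and 6-8 week ranges above; the 30.44-days-per-month conversion, the input format, and the initiative names are assumptions.

```python
from datetime import date, timedelta

DAYS_PER_MONTH = 30.44  # average month length; an approximation


def plan_launches(initiatives: list[tuple[str, float]], start: date,
                  max_concurrent: int = 5, stagger_weeks: int = 6,
                  buffer: float = 0.30) -> list[tuple[str, date, date]]:
    """Return (name, launch, buffered_end) for each initiative, in order.

    Launches are at least `stagger_weeks` apart, each estimate is stretched by
    `buffer`, and no more than `max_concurrent` initiatives run at once.
    """
    schedule = []
    running_ends: list[date] = []  # buffered end dates of in-flight initiatives
    next_slot = start
    for name, months in initiatives:
        launch = next_slot
        running_ends = [e for e in running_ends if e > launch]
        if len(running_ends) >= max_concurrent:
            launch = min(running_ends)  # wait for the earliest finisher
            running_ends = [e for e in running_ends if e > launch]
        end = launch + timedelta(days=round(months * (1 + buffer) * DAYS_PER_MONTH))
        schedule.append((name, launch, end))
        running_ends.append(end)
        next_slot = launch + timedelta(weeks=stagger_weeks)
    return schedule


for name, launch, end in plan_launches(
    [("Permit modernization", 12), ("Fleet electrification", 18), ("311 redesign", 9)],
    start=date(2026, 7, 1),
    max_concurrent=2,
):
    print(f"{name}: {launch} -> {end}")
```

With a 12-month estimate, the buffered end lands 475 days after launch (about 15.6 months), in line with the "12-month project is really a 16-month project" rule of thumb.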

The City of Durham learned this lesson directly. In their early implementation, staff reverted to Excel because the connection between strategic objectives and daily work wasn't clear. The breakthrough came when they redesigned the process to link every department's business plan to the citywide strategy — and backed it with actual budget connections. The result: an ICMA Center for Performance Measurement Certificate of Distinction and a maintained triple-A bond rating.

Step 5: Review monthly, adapt quarterly, restructure annually

Strategic planning is not a one-time event — it's a continuous monitoring discipline. The Balanced Scorecard Institute affirms this: strategic planning is an ongoing process of adaptation and improvement.

The review cadence that works (proven by data):

The City of Fort Collins structures their reviews as monthly Strategy MAP meetings (40-50 minutes per service area) with quarterly comprehensive assessments of all 7 strategic outcomes and 38 top-tier metrics. They've maintained this cadence for years, keeping initiative drift near zero and maintaining steady progress across all strategic priorities.

What to review in each cycle:

- Monthly (40 min): Goal status traffic light (green/yellow/red), KPI trend direction, blocked initiatives, owner update compliance

- Quarterly (half-day): Full strategic assessment, environmental shifts requiring pivots, initiative reprioritization, phantom owner remediation

- Annually: Strategic plan refresh — revisit 3-5 year horizon, retire completed objectives, launch new cycle
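To turn this cadence into a calendar, a sketch like the following lays out one fiscal year of reviews. It assumes reviews are scheduled on the 1st of the month, that quarterly and annual reviews are held in addition to (not instead of) the monthly one, and that they fall in the last month of each quarter and of the year; the agenda items are taken from the list above.

```python
from datetime import date

# Agenda items taken from the review-cadence list.
AGENDA = {
    "monthly": ["goal status traffic light", "KPI trend direction",
                "blocked initiatives", "owner update compliance"],
    "quarterly": ["full strategic assessment", "environmental shifts",
                  "initiative reprioritization", "phantom owner remediation"],
    "annual": ["revisit 3-5 year horizon", "retire completed objectives",
               "launch new cycle"],
}


def review_calendar(fiscal_year_start: date) -> list[tuple[date, str]]:
    """One fiscal year of (review date, review type) pairs.

    Assumes every review is held on the 1st of the month, quarterly reviews are
    added in the last month of each quarter, and the annual refresh is added in
    the final month; monthly reviews continue in every month.
    """
    events = []
    for i in range(12):
        index = fiscal_year_start.year * 12 + (fiscal_year_start.month - 1) + i
        review_day = date(index // 12, index % 12 + 1, 1)
        events.append((review_day, "monthly"))
        if (i + 1) % 3 == 0:
            events.append((review_day, "quarterly"))
        if i == 11:
            events.append((review_day, "annual"))
    return events


for when, kind in review_calendar(date(2026, 7, 1)):
    if kind != "monthly":
        print(when, kind, "->", ", ".join(AGENDA[kind]))
```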

Use a rolling plan process. The GFOA recommends continually evaluating and reassessing vision and strategies — conducting interim reviews every 1-3 years and more comprehensive strategic planning every 5-10 years. Don't wait for the plan to expire to start thinking about the next one.

Strategic planning frameworks: when to use each (and when they break)

There's no single "best" framework — but after seeing how each one performs across 21,000+ plans, I have stronger opinions than most consultants about when each one works and when it doesn't.

| Framework | Best For | Key Strength | Where It Breaks |
|---|---|---|---|
| Balanced Scorecard | Government, healthcare, large enterprise | Connects financial and non-financial metrics across 4 perspectives | Initial implementation complexity; requires discipline to maintain all four perspectives |
| OKRs | Tech, fast-moving organizations | Drives ambitious quarterly goal-setting | Dangerous for government: quarterly cadence clashes with budget cycles and creates churn |
| Hoshin Kanri | Manufacturing, operational excellence | Rigorous top-down cascade alignment | Requires organizational discipline that most non-manufacturing orgs lack |
| OGSM | CPG, marketing-driven organizations | Simple one-page format, easy executive buy-in | Oversimplifies complex multi-stakeholder strategies |

The Balanced Scorecard remains the most widely used framework across ClearPoint's platform — and there's a reason for that. Developed by Robert Kaplan and David Norton, the BSC forces organizations to balance short-term financial results with the longer-term drivers of sustainable performance across four perspectives: financial, customer, internal processes, and learning & growth.

I spent 15 years working directly with Kaplan and Norton. The thing most people miss about the Balanced Scorecard isn't the four perspectives — it's the discipline of strategy mapping. Bob always said that if you can't draw the cause-and-effect relationships between your objectives, you don't have a strategy — you have a list. After seeing thousands of implementations, I think he was right about the diagnosis but underestimated how hard it is for organizations to maintain that discipline quarter after quarter without a monitoring system that enforces it. That's the gap ClearPoint was built to close.

ClearPoint supports all major frameworks — and many organizations use a hybrid approach. Carilion Clinic built their entire 300-scorecard system on BSC principles while customizing the cascade to fit healthcare's unique provider-level accountability requirements. The City of Coral Springs uses a BSC variant linked directly to budget allocation and maintains a public-facing community dashboard where residents can see how tax dollars connect to strategic outcomes.

Strategic planning by industry: what the benchmarks show

Government & public sector

Government is ClearPoint's largest vertical, with 7,776 strategic plans managing 26,227 active projects. Public sector organizations face challenges that corporate strategy frameworks weren't designed for: multi-year budget cycles, elected leadership transitions every 2-4 years, citizen transparency mandates, and reporting expectations shaped by GASB accounting standards and GFOA best practices.

The City of Coral Springs, FL — a Malcolm Baldrige National Quality Award–caliber organization — uses ClearPoint to link their strategic plan directly to budget allocation. As Performance Management Manager Nicole Giordano noted: "A strategic plan not linked to budget is an unfunded dream." Coral Springs publishes performance data on a public community dashboard, proving that transparency and accountability reinforce each other.

For government-specific benchmarks, see our city KPI benchmarks analysis.

Healthcare

Healthcare strategic planning operates under intense regulatory pressure — CMS quality mandates, Joint Commission accreditation, and payer-driven performance incentives all shape strategic priorities. Our healthcare strategic plan data shows that healthcare organizations tracking metrics at the individual provider level see measurably better compliance outcomes than those tracking only at the department level.

Carilion Clinic illustrates this: their 300-scorecard cascade from organization-wide to individual provider, with 20% of compensation tied to scorecard performance, creates accountability that department-level dashboards can't match. They refined their initial 70 clinic-level measures down to 7, consistent with our finding that focused objectives outperform sprawling ones at every organizational level.

Higher education, financial services & utilities

Each vertical brings distinct strategic planning dynamics: higher education faces accreditation cycles (MSCHE, HLC, SACSCOC) that mandate formal strategic planning; financial services navigates evolving regulatory frameworks where strategic pivots are constrained by compliance; and utilities balance infrastructure investment with rate-case constraints and environmental mandates.

ClearPoint serves all five verticals with industry-specific templates and benchmarking capabilities. Explore our sector-specific solutions to see how the platform adapts.

Tools & templates: start executing, not just planning

You don't need to build everything from scratch. But choose tools that address the execution gap — not just the formulation step:

- 15 Free Strategic Planning Templates — covering Balanced Scorecard, strategy maps, and implementation trackers

- Strategy Execution Toolkit — 41-page guide to transforming static goals into a dynamic strategy map

- Strategic Planning Templates (Gated) — 8 frameworks, ready to customize

- 2026 Strategic Planning Report — our annual analysis of 21,000+ plans with industry benchmarks

For step-by-step implementation guides: how to write a strategic plan and how to outline your strategic plan.

And if you're ready to move beyond templates: ClearPoint Strategy is strategic planning software built specifically for execution. We power 21,000+ plans and 2 million monthly updates across government, healthcare, education, and enterprise — because the gap between strategy and results isn't knowledge. It's infrastructure.

Related resources

- The Benefits of Strategic Planning: Why It Matters

- Strategic Planning vs. Operational Planning

- How to Outline Your Strategic Plan: Step-by-Step

- Balanced Scorecard Examples by Industry

- City KPI Benchmarks: What Top-Performing Cities Track

Frequently asked questions

What are the 5 steps of strategic planning?

Based on ClearPoint's analysis of 21,000+ strategic plans, the five most effective steps are: (1) Establish a quantitative performance baseline before setting strategy, (2) Set 4-6 focused strategic objectives maximum, (3) Build execution infrastructure — monitoring systems, frictionless reporting, and explicit accountability — before launching, (4) Execute with realistic timelines (the median strategic project takes 10.94 months) and sequenced initiatives, and (5) Review monthly, adapt quarterly, and restructure annually. The critical difference from textbook advice: most failures happen between steps 2 and 4, where organizations skip execution infrastructure and jump straight from planning to launching.

Why do strategic plans fail?

The #1 reason is the execution gap, and the data shows it. ClearPoint's analysis of 21,000+ plans shows only 39.85% of strategic goals are on-track at any given time. The root cause: 81% of assigned metric owners haven't updated their progress data in 90+ days (we call these "phantom owners"). Organizations with zero automation infrastructure have a 92% phantom rate; those with API integrations and automated schedules drop to 70%. The fix isn't better strategy; it's execution infrastructure: automated data feeds, frictionless reporting (ClearPoint's 7:1 update-to-login ratio), and visible ownership accountability.

What are the key components of a strategic plan?

A complete strategic plan includes: an executive summary, mission and vision statements, 4-6 focused strategic objectives (our data shows more than six dilutes execution quality), KPIs with assigned owners and defined update cadences, action plans with realistic timelines (budget 30% buffer beyond initial estimates), resource allocation tied to budget, and a monitoring framework with monthly and quarterly review cadences. ClearPoint data shows the average plan tracks 7.2 goals with 6.72 team members — but the most effective plans operate leaner.

What is the difference between strategic planning and operational planning?

Strategic planning sets the 3-5 year direction — it answers "where are we going and why?" Operational planning translates that into quarterly and annual actions — "how do we get there day-to-day?" The critical insight from our data: organizations that connect operational metrics directly to strategic objectives (as ClearPoint enables across 200,000+ active projects) maintain significantly higher goal completion rates than those that treat strategy and operations as separate documents.

What are the 5 C's of strategic planning?

The 5 C's are: Company (internal capabilities), Customers (audience needs), Competitors (market positioning), Collaborators (partners and alliances), and Climate (external economic, regulatory, and technological factors). ClearPoint's platform data suggests adding a sixth: Cadence, or how frequently you review and adapt. Our data shows that review frequency predicts success better than the quality of the initial analysis of any of the five C's.

How often should you review your strategic plan?

Monthly pulse checks plus quarterly deep dives. ClearPoint platform data from 11,000+ monthly active users shows that organizations reviewing quarterly maintain higher goal completion rates than annual reviewers. The City of Fort Collins uses monthly 40-minute Strategy MAP meetings per service area, plus quarterly reviews of all 7 strategic outcomes and 38 top-tier metrics. Annual "big bang" reviews — where you dust off the plan once a year — are the single most common trait of organizations with on-track rates below 30%.

What strategic planning framework should I use?

The Balanced Scorecard remains the most widely adopted across ClearPoint's 21,000+ plans, and it's especially strong for government, healthcare, and large enterprises needing to connect financial and non-financial metrics. OKRs work for fast-moving tech organizations but create problems in government (quarterly cadence clashes with budget cycles). Hoshin Kanri suits manufacturing. OGSM works for marketing-driven organizations. The most important choice isn't which framework — it's whether you build execution infrastructure alongside it. A mediocre framework with great monitoring will outperform a perfect framework with no follow-through.

How long should a strategic plan cover?

A strategic plan typically covers 3-5 years, but the document itself should be concise — 10-25 pages depending on complexity. More important than timeframe is focus: ClearPoint data shows the most effective plans limit themselves to 4-6 objectives. Given that the median project takes 10.94 months, a plan with 15 initiatives can't realistically deliver on all of them within a single planning cycle. The GFOA recommends a rolling process — interim reviews every 1-3 years, comprehensive replanning every 5-10 years. Don't wait for the plan to expire.
